Parallel computing with dask

xarray integrates with dask to support parallel computations and streaming computation on datasets that don’t fit into memory.

Currently, dask is an entirely optional feature for xarray. However, the benefits of using dask are sufficiently strong that dask may become a required dependency in a future version of xarray.

For a full example of how to use xarray’s dask integration, read the blog post introducing xarray and dask.

What is a dask array?

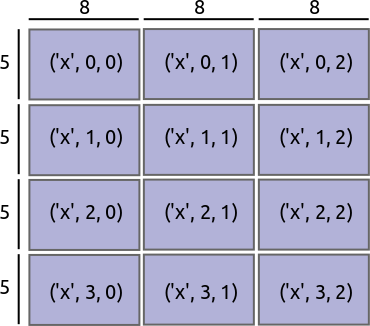

Dask divides arrays into many small pieces, called chunks, each of which is presumed to be small enough to fit into memory.

Unlike NumPy, which has eager evaluation, operations on dask arrays are lazy. Operations queue up a series of tasks mapped over blocks, and no computation is performed until you actually ask for values to be computed (e.g., to print results to your screen or write to disk). At that point, data is loaded into memory and computation proceeds in a streaming fashion, block-by-block.

The actual computation is controlled by a multi-processing or thread pool, which allows dask to take full advantage of multiple processors available on most modern computers.
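As a minimal sketch of this lazy behaviour, the snippet below uses dask.array directly. The array shape and chunk size are arbitrary values chosen for illustration, not recommendations.

```python
import dask.array as da

# A 1000x1000 array of ones, split into 250x250 blocks (4 x 4 = 16 chunks).
x = da.ones((1000, 1000), chunks=(250, 250))

# Building the expression only records tasks; nothing is computed yet.
total = (x + 1).sum()

# Calling compute() runs the task graph block-by-block and returns a plain value.
result = total.compute()
print(x.numblocks, type(total).__name__, result)
```

Until compute() is called, total is still a dask array that describes the work to be done rather than holding a number.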

For more details on dask, read its documentation.

Reading and writing data

The usual way to create a dataset filled with dask arrays is to load the data from a netCDF file or files. You can do this by supplying a chunks argument to open_dataset() or using the open_mfdataset() function.

In [1]: ds = xr.open_dataset('example-data.nc', chunks={'time': 10})

In [2]: ds

Out[2]:

<xarray.Dataset>

Dimensions: (x: 3, y: 5)

Coordinates:

* x (x) int64 0 1 2

Dimensions without coordinates: y

Data variables:

x_only (x) float64 0.2719 -0.425 0.567

x_and_y (x, y) float64 0.4691 -0.2829 -1.509 -1.136 1.212 -0.1732 ...

In this example latitude and longitude do not appear in the chunks dict, so only one chunk will be used along those dimensions. It is also entirely equivalent to open a dataset using open_dataset and then chunk the data using the chunk method, e.g., xr.open_dataset('example-data.nc').chunk({'time': 10}).
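The effect of a partial chunks dict can be checked without a file on disk. The sketch below builds a small in-memory dataset; the variable name temperature and the array shape are made up for illustration.

```python
import numpy as np
import xarray as xr

# Hypothetical dataset: 30 time steps on a 4x5 latitude/longitude grid.
ds = xr.Dataset(
    {'temperature': (('time', 'latitude', 'longitude'), np.zeros((30, 4, 5)))}
)

# Only 'time' is listed, so latitude and longitude each get a single chunk.
chunked = ds.chunk({'time': 10})
print(chunked.temperature.chunks)
```

The chunks attribute of the DataArray lists the block sizes along each dimension, in dimension order.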

To open multiple files simultaneously, use open_mfdataset():

xr.open_mfdataset('my/files/*.nc')

This function will automatically concatenate and merge datasets into one in the simple cases that it understands (see auto_combine() for the full disclaimer). By default, open_mfdataset will chunk each netCDF file into a single dask array; again, supply the chunks argument to control the size of the resulting dask arrays. In more complex cases, you can open each file individually using open_dataset and merge the result, as described in Combining data.

You’ll notice that printing a dataset still shows a preview of array values, even if they are actually dask arrays. We can do this quickly with dask because we only need to compute the first few values (typically from the first block). To reveal the true nature of an array, print a DataArray:

In [3]: ds.temperature

Once you’ve manipulated a dask array, you can still write a dataset too big to fit into memory back to disk by using to_netcdf() in the usual way.

A dataset can also be converted to a dask DataFrame using to_dask_dataframe().

In [4]: df = ds.to_dask_dataframe()

In [5]: df

Out[5]:

Dask DataFrame Structure:

x y x_only x_and_y

npartitions=1

0 int64 int64 float64 float64

14 ... ... ... ...

Dask Name: concat-indexed, 26 tasks

Dask DataFrames do not support multi-indexes, so the coordinate variables from the dataset are included as columns in the dask DataFrame.

Using dask with xarray

Nearly all existing xarray methods (including those for indexing, computation, concatenating and grouped operations) have been extended to work automatically with dask arrays. When you load data as a dask array in an xarray data structure, almost all xarray operations will keep it as a dask array; when this is not possible, they will raise an exception rather than unexpectedly loading data into memory. Converting a dask array into memory generally requires an explicit conversion step. One notable exception is indexing operations: to enable label-based indexing, xarray will automatically load coordinate labels into memory.

The easiest way to convert an xarray data structure from lazy dask arrays into eager, in-memory numpy arrays is to use the load() method:

In [6]: ds.load()

Out[6]:

<xarray.Dataset>

Dimensions: (x: 3, y: 5)

Coordinates:

* x (x) int64 0 1 2

Dimensions without coordinates: y

Data variables:

x_only (x) float64 0.2719 -0.425 0.567

x_and_y (x, y) float64 0.4691 -0.2829 -1.509 -1.136 1.212 -0.1732 ...

You can also access values, which will always be a numpy array:

In [7]: ds.temperature.values

Out[7]:

array([[[ 4.691e-01, -2.829e-01, ..., -5.577e-01, 3.814e-01],

[ 1.337e+00, -1.531e+00, ..., 8.726e-01, -1.538e+00],

...

# truncated for brevity

Explicit conversion by wrapping a DataArray with np.asarray also works:

In [8]: np.asarray(ds.temperature)

Out[8]:

array([[[ 4.691e-01, -2.829e-01, ..., -5.577e-01, 3.814e-01],

[ 1.337e+00, -1.531e+00, ..., 8.726e-01, -1.538e+00],

...

Alternatively, you can load the data into memory but keep the arrays as dask arrays using the persist() method.

This is particularly useful when using a distributed cluster because the data will be loaded into distributed memory across your machines and be much faster to use than reading repeatedly from disk. Be aware that on a single machine this operation will try to load all of your data into memory, so make sure that your dataset is not larger than available memory.
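A minimal sketch of persist() on a single machine follows. The dataset is small and invented for illustration, so memory is not a concern here.

```python
import numpy as np
import dask.array as da
import xarray as xr

# Hypothetical chunked dataset with a lazy computation queued on top of it.
values = np.arange(100.0).reshape(20, 5)
ds = xr.Dataset({'temperature': (('time', 'latitude'), values)}).chunk({'time': 5})
doubled = ds * 2  # still lazy

# persist() computes the chunks and holds them in memory,
# but the variables remain dask arrays with the same chunking.
persisted = doubled.persist()
print(type(persisted.temperature.data).__name__, persisted.temperature.chunks)
```

Unlike load(), the result is still chunked, so later operations on it continue to run lazily and in parallel.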

For performance you may wish to consider chunk sizes. The correct choice of chunk size depends both on your data and on the operations you want to perform. With xarray, both converting data to dask arrays and converting the chunk sizes of dask arrays are done with the chunk() method:

In [9]: rechunked = ds.chunk({'latitude': 100, 'longitude': 100})

You can view the size of existing chunks on an array by viewing the chunks attribute:

In [10]: rechunked.chunks

If chunk sizes are not consistent between all the arrays in a dataset along a particular dimension, an exception is raised when you try to access .chunks.

Note

In the future, we would like to enable automatic alignment of dask chunksizes (but not the other way around). We might also require that all arrays in a dataset share the same chunking alignment. Neither of these is currently done.
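The inconsistent-chunks case can be reproduced with two small arrays. This is a sketch with made-up variable names (a, b) and sizes; rechunking the whole dataset along the shared dimension is one way to make the chunk sizes agree again.

```python
import numpy as np
import xarray as xr

# Two variables that share dimension 'x' but are chunked differently.
a = xr.DataArray(np.zeros(10), dims='x').chunk({'x': 5})
b = xr.DataArray(np.zeros(10), dims='x').chunk({'x': 2})
ds = xr.Dataset({'a': a, 'b': b})

try:
    ds.chunks
    raised = False
except ValueError:
    # The dataset-level chunks attribute refuses to report mixed chunk sizes.
    raised = True

# Rechunking the dataset gives every variable the same chunks along 'x'.
fixed = ds.chunk({'x': 5})
print(raised, dict(fixed.chunks))
```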

NumPy ufuncs like np.sin currently only work on eagerly evaluated arrays (this will change with the next major NumPy release). We have provided replacements that also work on all xarray objects, including those that store lazy dask arrays, in the xarray.ufuncs module:

In [11]: import xarray.ufuncs as xu

In [12]: xu.sin(rechunked)

To access dask arrays directly, use the new DataArray.data attribute. This attribute exposes array data either as a dask array or as a numpy array, depending on whether it has been loaded into dask or not:

In [13]: ds.temperature.data

Note

In the future, we may extend .data to support other “computable” array backends beyond dask and numpy (e.g., to support sparse arrays).
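To see how .data changes with the state of the array, the sketch below checks its type before and after load(). The dataset and its temperature variable are hypothetical.

```python
import numpy as np
import dask.array as da
import xarray as xr

ds = xr.Dataset(
    {'temperature': (('time', 'latitude', 'longitude'),
                     np.arange(24.0).reshape(2, 3, 4))}
)

# chunk() returns a new dataset backed by dask arrays.
lazy = ds.chunk({'time': 1})
lazy_type = type(lazy.temperature.data)

# load() converts the dataset in place and returns it,
# so capture the lazy type before calling it.
loaded = lazy.load()
eager_type = type(loaded.temperature.data)
print(lazy_type.__name__, eager_type.__name__)
```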

Automatic parallelization

Almost all of xarray’s built-in operations work on dask arrays. If you want to use a function that isn’t wrapped by xarray, one option is to extract dask arrays from xarray objects (.data) and use dask directly.

Another option is to use xarray’s apply_ufunc(), which can automate embarrassingly parallel “map” type operations where a function written for processing NumPy arrays should be repeatedly applied to xarray objects containing dask arrays. It works similarly to dask.array.map_blocks() and dask.array.atop(), but without requiring an intermediate layer of abstraction.

For the best performance when using dask’s multi-threaded scheduler, wrap a function that already releases the global interpreter lock, which fortunately already includes most NumPy and SciPy functions. Here we show an example using NumPy operations and a fast function from bottleneck, which we use to calculate Spearman’s rank-correlation coefficient:

import numpy as np
import xarray as xr
import bottleneck

def covariance_gufunc(x, y):
    return ((x - x.mean(axis=-1, keepdims=True))
            * (y - y.mean(axis=-1, keepdims=True))).mean(axis=-1)

def pearson_correlation_gufunc(x, y):
    return covariance_gufunc(x, y) / (x.std(axis=-1) * y.std(axis=-1))

def spearman_correlation_gufunc(x, y):
    x_ranks = bottleneck.rankdata(x, axis=-1)
    y_ranks = bottleneck.rankdata(y, axis=-1)
    return pearson_correlation_gufunc(x_ranks, y_ranks)

def spearman_correlation(x, y, dim):
    return xr.apply_ufunc(
        spearman_correlation_gufunc, x, y,
        input_core_dims=[[dim], [dim]],
        dask='parallelized',
        output_dtypes=[float])

The only aspect of this example that is different from standard usage of apply_ufunc() is that we needed to supply the output_dtypes argument. (Read up on Wrapping custom computation for an explanation of the “core dimensions” listed in input_core_dims.)

Our new spearman_correlation() function achieves near linear speedup when run on large arrays across the four cores on my laptop. It would also work as a streaming operation, when run on arrays loaded from disk:

In [14]: rs = np.random.RandomState(0)

In [15]: array1 = xr.DataArray(rs.randn(1000, 100000), dims=['place', 'time']) # 800MB

In [16]: array2 = array1 + 0.5 * rs.randn(1000, 100000)

# using one core, on numpy arrays

In [17]: %time _ = spearman_correlation(array1, array2, 'time')

CPU times: user 21.6 s, sys: 2.84 s, total: 24.5 s

Wall time: 24.9 s

In [18]: chunked1 = array1.chunk({'place': 10})

In [19]: chunked2 = array2.chunk({'place': 10})

# using all my laptop's cores, with dask

In [20]: r = spearman_correlation(chunked1, chunked2, 'time')

In [21]: %time _ = r.compute()

CPU times: user 30.9 s, sys: 1.74 s, total: 32.6 s

Wall time: 4.59 s

One limitation of apply_ufunc() is that it cannot be applied to arrays with

multiple chunks along a core dimension:

In [22]: spearman_correlation(chunked1, chunked2, 'place')

ValueError: dimension 'place' on 0th function argument to apply_ufunc with

dask='parallelized' consists of multiple chunks, but is also a core

dimension. To fix, rechunk into a single dask array chunk along this

dimension, i.e., ``.rechunk({'place': -1})``, but beware that this may

significantly increase memory usage.

This reflects the nature of core dimensions, in contrast to broadcast (non-core) dimensions that allow operations to be split into arbitrary chunks for application.
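A minimal sketch of the workaround the error message suggests: rechunk so that the core dimension is a single chunk, while the broadcast dimension stays split. The helper mean_gufunc and the array sizes are hypothetical, and passing -1 to chunk() to mean "one chunk spanning the whole dimension" is assumed to be supported by the installed xarray and dask versions.

```python
import numpy as np
import xarray as xr

def mean_gufunc(x):
    # apply_ufunc moves the core dimension to the last axis.
    return x.mean(axis=-1)

data = np.arange(200.0).reshape(20, 10)
array = xr.DataArray(data, dims=['place', 'time']).chunk({'place': 5})

# 'place' will be the core dimension, so collapse it into one chunk;
# 'time' is a broadcast dimension and can be split freely.
single = array.chunk({'place': -1, 'time': 5})

result = xr.apply_ufunc(
    mean_gufunc, single,
    input_core_dims=[['place']],
    dask='parallelized',
    output_dtypes=[float])
values = result.compute().values
print(single.chunks, values.shape)
```

As the error message warns, a single chunk along the core dimension means each block holds the full extent of that dimension, which can raise memory usage considerably on large arrays.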

Tip

For the majority of NumPy functions that are already wrapped by dask, it’s usually a better idea to use the pre-existing dask.array function, by using either pre-existing xarray methods or apply_ufunc() with dask='allowed'. Dask can often have a more efficient implementation that makes use of the specialized structure of a problem, unlike the generic speedups offered by dask='parallelized'.

Chunking and performance

The chunks parameter has critical performance implications when using dask arrays. If your chunks are too small, queueing up operations will be extremely slow, because dask translates each operation into a huge number of operations mapped across chunks. Computation on dask arrays with small chunks can also be slow, because each operation on a chunk has some fixed overhead from the Python interpreter and the dask task executor.

Conversely, if your chunks are too big, some of your computation may be wasted, because dask only computes results one chunk at a time.

A good rule of thumb is to create arrays with a chunksize of at least one million elements (e.g., a 1000x1000 matrix). With large arrays (10+ GB), the cost of queueing up dask operations can be noticeable, and you may need even larger chunksizes.
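The arithmetic behind this rule of thumb can be sketched in plain Python. The helper chunk_summary is hypothetical, and the 8 bytes per element assumes float64 data.

```python
import math

def chunk_summary(shape, chunks, itemsize=8):
    """Return (number of chunks, elements per chunk, megabytes per chunk)."""
    n_chunks = math.prod(math.ceil(s / c) for s, c in zip(shape, chunks))
    elements = math.prod(chunks)
    return n_chunks, elements, elements * itemsize / 1e6

# The same 10000x10000 array (about 800 MB) chunked two ways.
small = chunk_summary((10000, 10000), (100, 100))
large = chunk_summary((10000, 10000), (1000, 1000))
print(small)
print(large)
```

With 100x100 chunks, every operation on the array turns into 10,000 tasks of only 10,000 elements each; with 1000x1000 chunks, it is 100 tasks of one million elements (8 MB) each.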

Optimization Tips

With analysis pipelines involving both spatial subsetting and temporal resampling, dask performance can become very slow in certain cases. Here are some optimization tips we have found through experience:

1. Do your spatial and temporal indexing (e.g. .sel() or .isel()) early in the pipeline, especially before calling resample() or groupby(). Grouping and resampling trigger some computation on all the blocks, which in theory should commute with indexing, but this optimization hasn’t been implemented in dask yet. (See dask issue #746).

2. Save intermediate results to disk as netCDF files (using to_netcdf()) and then load them again with open_dataset() for further computations. For example, if subtracting the temporal mean from a dataset, save the temporal mean to disk before subtracting. Again, in theory, dask should be able to do the computation in a streaming fashion, but in practice this is a fail case for the dask scheduler, because it tries to keep every chunk of an array that it computes in memory. (See dask issue #874)

3. Specify smaller chunks across space when using open_mfdataset() (e.g., chunks={'latitude': 10, 'longitude': 10}). This makes spatial subsetting easier, because there’s no risk you will load chunks of data referring to different chunks (probably not necessary if you follow suggestion 1).
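The first tip can be sketched as follows: subset first, then group. The array, its coordinates, and the chunk size are invented for illustration.

```python
import numpy as np
import pandas as pd
import xarray as xr

# Hypothetical daily data for the year 2000 on a small grid, chunked in time.
times = pd.date_range('2000-01-01', periods=366)
temperature = xr.DataArray(
    np.random.rand(366, 6, 8),
    dims=('time', 'latitude', 'longitude'),
    coords={'time': times,
            'latitude': np.arange(6),
            'longitude': np.arange(8)},
    name='temperature',
).chunk({'time': 100})

# Index first so that the grouped operation only touches the region of interest...
subset = temperature.isel(latitude=slice(0, 2), longitude=slice(0, 3))

# ...and only then group and reduce.
monthly = subset.groupby('time.month').mean('time')
result = monthly.compute()
print(dict(result.sizes))
```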